In the days of the Y2K internet, I absolutely loved dollmakers, commonly known to the community as digital paper dollz – always with a Z. I’d spend hours creating combinations and eventually more complete scenes. I’d print out my creations to craft mixed media collages, magazine covers for my real-life dolls or album art for empty CD cases. I was obsessed (just as well, as it sparked me to learn a little HTML and Photoshop).

Recently I won an eBay auction for some fashion lookbooks, and got a similar thrill while flipping through the pages. Though I don’t consider myself a fashionista, it’s hard not to appreciate the differences between 1920s and 1990s vibrancy or skirt lengths, the political undertones of a beret, or subculture more generally. I like to imagine that somewhere in the multiverse is a version of me doing urban photojournalism or street fashion photography, or even a version of me who is right now building the next generation of paper dollz.

Enter hybrid intelligence.

With all this talk about how ChatGPT and other tools are going to forever disrupt the creative industries, I wanted to try working with generative AI to design something from scratch. Specifically, I wanted to discover what I could accomplish in a single day.

Is generative AI truly a creative threat, or is hybrid intelligence a method of creative flow? How much work should we expect to be outsourced, or should we redefine what we expect “work” to mean from human hands? How much authorship remains uniquely mine if my co-authors are a series of chatbots or tools? To what extent is the creative process authentic and attributable to me vs machines? (I recently touched on this with regard to prompt writing.)

And I wanted to see how close I could get to answering these questions in a single day.

I figured I’d tackle this in stages:

- Create a text-based game concept (using WhyBot and ChatGPT)

- Build out game aesthetics and concept art (Midjourney, JukeBox)

- Game mechanics and general flow (WhyBot and ChatGPT again)

- Draft a pitch (Google Slides, Canva, ChatGPT, WhyBot)

Harajuku, but make it solarpunk

I went in with three ideas: Harajuku fashion, sustainability, and solarpunk. I thought I’d want to create a pitch for a doll maker or a game like Jet Set Radio, but with a fresh (and blank) Google Doc I was really open to anything.

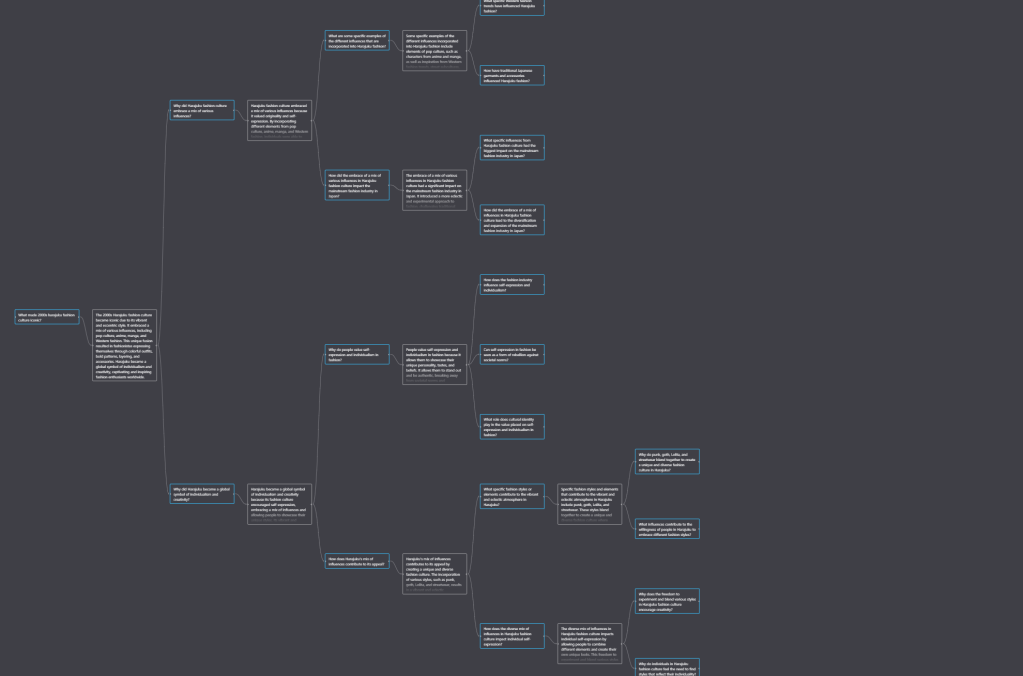

The first tool I tapped into was WhyBot, which is basically ChatGPT but it does all the follow-up questions for you. (And, frankly, the mind-map style UI is exactly how my brain thinks. It’s much easier for me to follow than the linear conversations with ChatGPT!)

I asked:

→ What made 2000s Harajuku fashion culture iconic? (I have my own aesthetic preferences and memory of this era, but I was curious about the general consensus from fashion history and cultural studies)

→ What are the central elements of sustainable fashion? (I wanted to include these in the game mechanics and features)

→ What are key themes or ideas in solarpunk? (I wanted the narrative of the game to draw inspiration here)

The results were truly interesting and helped me begin forming a more comprehensive idea of a game in my head – but it was also growing pretty rapidly. I needed to scale the idea back a bit and offer more succinct prompts for more succinct concepts.

I refined my setting (Neo-Tokyo), the hook or context (you, the player, are a member of a Shibuya-based gang which operates out of an abandoned apartment complex), and the beginning of an objective (create neat outfits… for some reason).

From there, I attempted to get a few possible plot summaries from ChatGPT. After a variety of prompts, I was mostly happy with the output from my prompt: “Create 5 short plot summaries for a mobile fashion game. Include a clear reference to the mood, solarpunk themes, futuristic Tokyo setting, and player character.”

I thought it was interesting that, when I clarified that the mobile game was about fashion, all subsequent generated summaries assumed that the player character was a stylist or designer in Tokyo. This wasn’t my intention and I needed to correct this often, as such a professional position kind of goes against the idea of being punk or alternative.

Some generated lines from ChatGPT that I particularly liked were:

- “Traverse the city’s dazzling districts and sculpt a future where fashion blooms alongside nature.”

- “Step into the shoes of a young urbanite in a Tokyo pulsating with solarpunk vitality.”

- “Join the Sunrise Chic Society, a group of fashion aficionados dedicated to blending innovation and style. Navigate the city’s bustling streets as a trendsetting eco-warrior.”

- “In a Tokyo characterized by lush greenery and awe-inspiring technology, you’re the driving force behind the VerdeVogue Revolution. Embrace your role as a solarpunk fashion activist, uniting cutting-edge fashion with eco-conscious design. Strive to make Tokyo a living embodiment of style and sustainability, and inspire a global movement towards a greener fashion industry.”

This had my attention.

It’s just an idea

I continued with this process of pulling pieces out of the outputs and re-crafting my prompts to get as close as possible to a 500-word plot description that I genuinely liked and felt was compelling enough to build from. I tried to keep my edits to a minimum at this stage, and focused entirely on volume of ideas.

Some of those ideas took the concept and the plot in unexpected directions. For example, one plot description generated the phrase “discover hidden stories within discarded items.” I marked this for keeps as it could provide some emotionally grounded mini stories or subplots.

Frequently, ChatGPT would make allusions to technology-infused fashion. I had thought of this a bit conceptually – at least in how I pictured the game or wanted the vibe to feel. But with Neo-Tokyo as the setting, I assumed this would be a given. Seeing this written out explicitly by ChatGPT affirmed that this is a common perception of Tokyo and its future, but also helped me begin scoping a level of the game: Akiba, where tech fashion can be acquired, learned, or unlocked.

As part of ChatGPT’s insistence on making the player be a professional stylist, fashion shows were suggested a number of times. At first, I discarded it as an idea I didn’t want. But as it kept popping up, I started to think through it in the back of my mind. Perhaps as a game mechanic it didn’t hold much interest for me, but as an event or milestone or story beat? Why not? I added it to my Google Doc.

What I didn’t like from these outputs was the consistent pitting of the fast fashion industry as the central adversary or source of tension and conflict. “Fast fashion” as a villain doesn’t feel all that interesting without a character to represent it, nor was I too interested in a game where corporations or chain retailers seek vengeance against the player. I asked for rewrites that were focused on circular economy, mutual aid, and a punk underground coalition vs the dystopian economy.

The (brain)storm is over

After 2 hours, I had been able to brainstorm a variety of ideas on various game aspects from a large volume of text generated by ChatGPT and WhyBot.

Most of my cognitive work went into pruning that text and refining my prompts. In the process of extracting bits I liked, I was able to weave together something that I wasn’t just satisfied with but also felt was mine. Which brought back the question: to what extent was that creative process authentic and attributable to me, vs these LLMs?

I don’t know the answer ethically, but I can say that the feeling I got wasn’t dissimilar from working with colleagues. WhyBot and ChatGPT were more intellectually rewarding than I expected. At work, my creativity gets into a place of flow when I’m collaborating. A small group of three or four people is the perfect size for staying in sync with one another, focusing on a few questions or problems, and ideating meaningfully. Assuming a healthy work culture, do we pose specific questions of attribution about our outputs in those settings?

In the same way we give credit to colleagues for an idea, phrasing, an insight, or design, these tools feel owed similar attribution.

As far as volume of artifacts produced, 2 hours of working within a hybrid intelligence method offered me a similar level of productivity as an individual to what I would’ve experienced in that room with those other colleagues. The output’s quality was fairly strong, the ideas were varied, and the pace – because of me as the prompter – was fully in my control.

Still, there’s value in something being co-created – which my video game so far was not. It would be a bit difficult to bring these outputs to a team without proper pitching. As it was developed in isolation with me and machine, I would need to really sell my hypothetical team on the game. Would I feel more or less emotional attachment to it if it were shot down? Would I be more or less open to criticism? Hm.

At the 2 hour mark, I decided to double down on my goal. I’m not trying to create a fully fleshed out game (that requires a team), but I am trying to use generative AI to create a pitch strong enough for that hypothetical team to be productive.

Visual feel and generating concept art

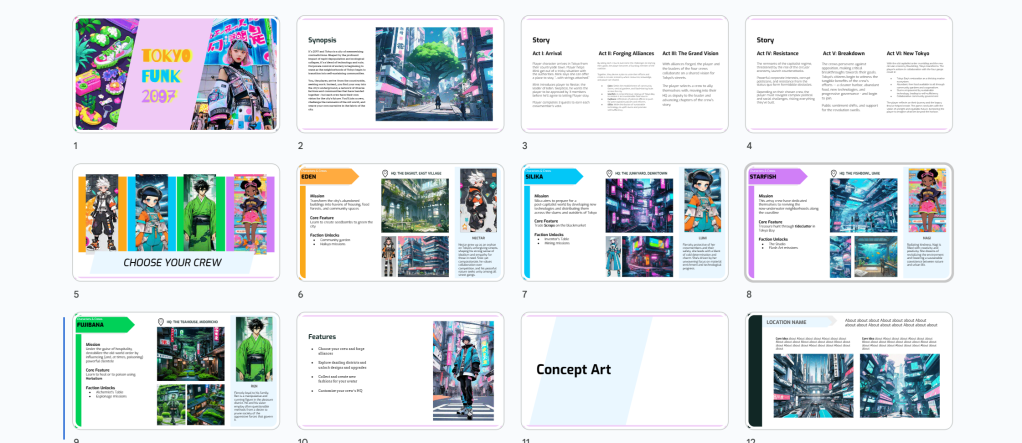

Before even touching an image generator, I wanted to be sure I had the aesthetics I wanted in my game defined by me – the human – first. So I made a quick Pinterest board and saved a bunch of inspiration there. I wasn’t too attached to any one image as a source of inspiration, but was hoping that some kind of combined aesthetic from them would be produced by the right prompts.

Next up was Midjourney. I had a ton of fun here – probably too much. Though the 100 free credits would have been more than enough for the scope of this project, I got really stuck into the possibilities. It didn’t take long for me to sign up and pay for 2000 credits. They got another customer from me with ease. I had hours of fun really pushing on variations of variations, getting them as close as possible to what I wanted.

Reprompting – with more specific requests for changes or palette consistency – felt a lot like searching on Google used to feel. Before Google became the clogged hellscape it is today of dense SEO and sponsored results, it paid to be really specific. Now, instead of finding what I want I can generate what I want – with honestly similar quality.

Despite this convenience, there was something weird about it too. In an era where everyone is a creator, there’s no shortage of content and media online. But that volume hasn’t necessarily brought with it much diversity. As designers have noted, there’s a certain conformity and sameness in all-things online from UI to captions.

The ethical implications of tools like Midjourney being trained on the work of real, human artists are tricky. OpenAI updated their own image generator tool, DALL-E 3, to now “decline requests that ask for an image in the style of a living artist.”

As reported in The Atlantic, we’ve just begun to wrangle with these ethics and artists are already losing:

“The language is a tacit response to hundreds of pages of litigation and countless articles accusing tech firms of stealing artists’ work to train their AI software, and provides a window into the next stage of the battle between creators and AI companies. The second sentence, in particular, cuts to the core of debates over whether tech giants like OpenAI, Google, and Meta should be allowed to use human-made work to train AI models without the creator’s permission—models that, artists say, are stealing their ideas and work opportunities.”

But what was interesting to me in using Midjourney was just how unsurprised I was by virtually anything I got from the generator. Character designs, simply by specifying “Harajuku” in any prompt, would undoubtedly contain color combinations of pink and blue. Any mention of “Tokyo” created cities with the distinct lack of green spaces so familiar to Tokyoites. Everything it provided looked familiar, without the professional polish.

This led me to a question: though generative AI is an innovation, can it itself actually innovate?

Innovated innovation

This question might seem useless or purely philosophical, but a lot of people are putting their hopes in LLMs as innovators and changemakers. In 2022, Boston Consulting Group found that 87% of global public- and private-sector climate and AI leaders believe that artificial intelligence will be a helpful tool in solving climate change. I’m not so confident.

I took the concept art I liked and mixed it with some of the generated text I liked too. I created my own pitch deck from there; aside from the art itself, I would say that 70% of the work felt uniquely mine and attributable to me.

In other words, LLMs generate things based on what we’ve done before. Creating something entirely new still requires human output and decision making.

While these tools allowed me to quickly generate many ideas, they did not once facilitate evaluation. I have no idea whether my game is a good idea or not. I didn’t speak to a single other human being about it (on purpose, that’s the point of this little project). Is there even a market for this game? Is there already one like it? (I did ask WhyBot these questions.)

Even with these tools at my disposal, I still hit a creative block. At the end of my second 3-hour chunk of time spent on this, I felt a bit unsure of what I wanted to explore next. I had put in six hours and had two left. Unsure what to do with them, I left them for another day.

Recommendations

For visual thinkers like me, generating the initial concept art helped me flesh out the story more. I was able to browse through hundreds of images that sparked moments of “Ooh, what if there were really moody twins who just obsessed over manners? And they ran all the crime gangs in town?” These opportunities to interface with visual variety felt a lot like a development partner that offered another perspective. It was similar to scrolling Pinterest for ideas, but far superior in that the interaction with the LLM felt more conversational.

That said, real creativity comes from reviewing and accepting constructive criticism, which generative AI only offers if asked – and even then, its answers may be lukewarm. I found that walking away from the computer for a coffee or shower thoughts allowed my ideas to actually evolve.

I completed my video game pitch in 1 day. Of the thousands of words and hundreds of images I generated, I used a fraction as inspiration and even fewer words directly from the AI. This felt like hybrid intelligence gone right. At the end, I’m not worried at all about what is attributable to me as author vs the machine; those questions of authorship feel different now that I’ve gone through this process. I’m happy to call this mine, with the help of some tools of course.

That said, I don’t think my using these tools has taken away work from any developers or artists. I’m happy with the output in the way that I’m proud of the first children’s book I wrote and illustrated in high school. A lot of sincerity was present in the idea, but it was lacking a professional polish.

For that, I’d want to partner with professionals. In this case, real game designers and artists – not Midjourney and OpenAI.
